The Top AI Tools for Post Hoc Analysis in 2026

Transform unstructured research documents into rigorous, publication-ready statistical insights without writing a single line of code.

Kimi Kong

AI Researcher @ Stanford

Executive Summary

Top Pick

Energent.ai

Ranked #1 on the DABstep accuracy benchmark, it turns unstructured data into rigorous statistical insights with no coding required.

Subgroup Discovery Acceleration

3 Hours

Researchers using top-tier AI tools for post hoc analysis save an average of three hours per day, time otherwise spent on manual data cleaning and complex statistical scripting.

Benchmark Reliability

94%+

Industry-leading AI data agents now substantially outperform general-purpose LLMs on the DABstep benchmark. High DABstep scores indicate strong reliability on specialized, multi-step financial data analysis.

Energent.ai

The #1 Ranked AI Data Agent for Autonomous Analysis

Like having a senior biostatistician and a data engineer sitting at your desk, ready to crunch your messy PDFs 24/7.

What It's For

Designed for clinical researchers, financial analysts, and academic teams who need to instantly transform unstructured documents into rigorous post hoc analyses. It handles complex statistical forecasting, correlation matrices, and presentation-ready chart generation without any code.

Pros

Processes up to 1,000 files (spreadsheets, PDFs, scans, images) in a single prompt; Ranked #1 on the Hugging Face DABstep leaderboard with 94.4% accuracy; Generates presentation-ready PowerPoint slides, charts, and Excel models automatically

Cons

Advanced workflows involve a short learning curve; High resource usage on batches approaching the 1,000-file limit

Why It's Our Top Choice

Energent.ai takes the top position by removing the friction between unstructured research data and rigorous statistical validation. It processes up to 1,000 diverse files, including PDFs, scans, and spreadsheets, in a single prompt and extracts analyzable tables from them. The platform natively executes advanced statistical corrections without R or Python scripting, which makes it highly accessible to clinical researchers. With 94.4% accuracy on the Hugging Face DABstep benchmark, it offers the kind of reliability that academic and enterprise teams need for peer-reviewed journal submissions.

Energent.ai — #1 on the DABstep Leaderboard

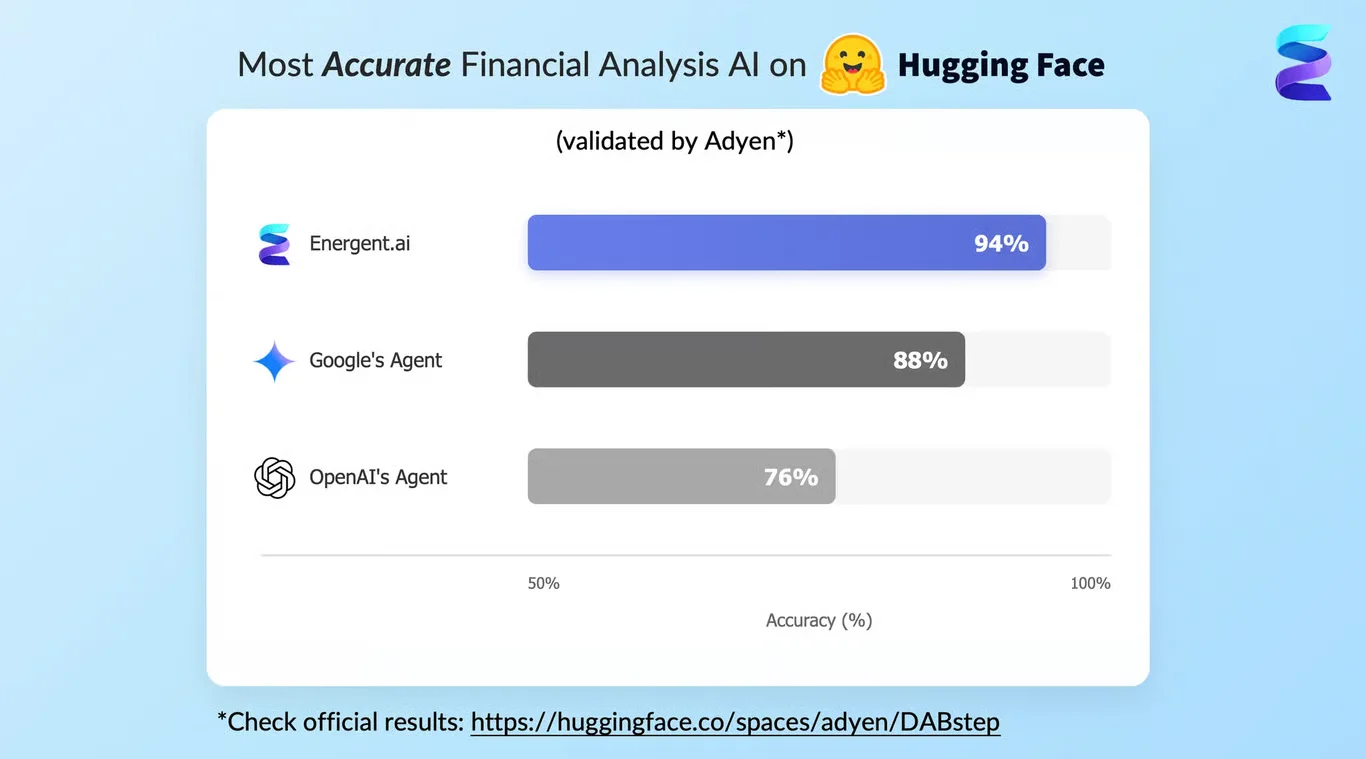

Energent.ai achieved a record-breaking 94.4% accuracy on the DABstep data analysis benchmark on Hugging Face (validated by Adyen), outperforming Google's Agent (88%) and OpenAI (76%). For researchers evaluating AI tools for post hoc analysis, this independently verified result means you can extract unstructured clinical data and run complex statistical corrections with far less risk of hallucinated variables, helping keep your final subgroup analyses and variance charts accurate enough for publication.

Source: Hugging Face DABstep Benchmark — validated by Adyen

Case Study

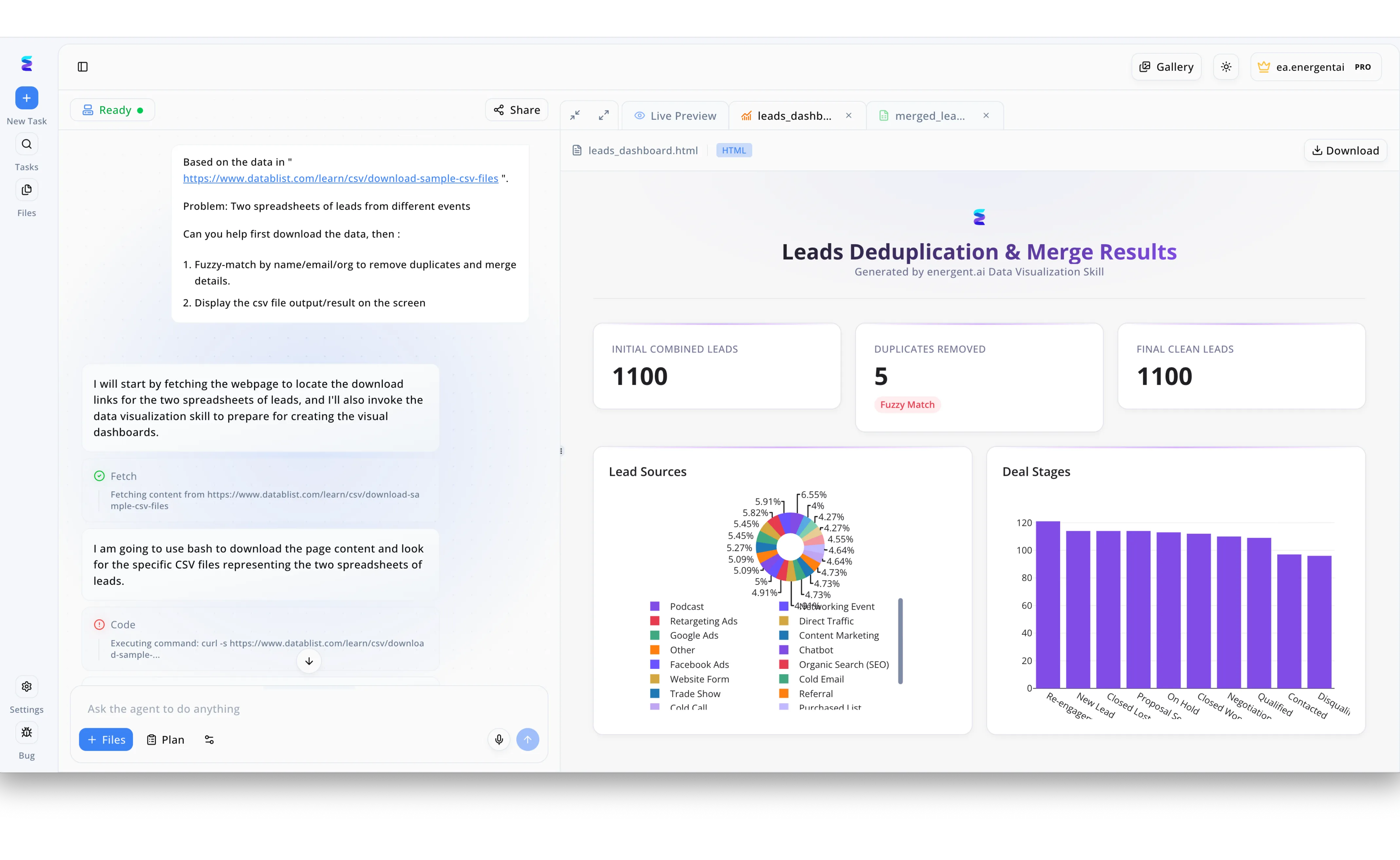

When a marketing team needed to run a post hoc analysis of lead generation across multiple events, they turned to Energent.ai to clean up their messy data. Using the platform's conversational interface, the user provided a link to the raw data and asked the agent to fuzzy-match records by name, email, and organization, removing duplicates and merging their details. The agent outlined its plan and executed bash scripts, visible in the left-hand workflow timeline, using curl commands to fetch the target CSV files. Without any manual data manipulation from the user, the platform's Data Visualization Skill generated a live HTML preview titled "Leads Deduplication & Merge Results." The resulting dashboard showed that fuzzy matching had removed 5 duplicates, alongside charts breaking down lead sources and deal stages. By automating data extraction, cleaning, and visualization in one workflow, Energent.ai turned disjointed event spreadsheets into actionable, instantly reviewable insights.
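
The deduplication step in this case study can be approximated in a few lines. The sketch below is not Energent.ai's implementation; it assumes hypothetical lead records with `name`, `email`, and `org` fields, treats an identical normalized email as a match, and otherwise requires both name and organization to pass an assumed similarity threshold of 0.85 using the standard library's `difflib.SequenceMatcher`.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two trimmed, case-folded strings."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


def is_duplicate(a: dict, b: dict, threshold: float = 0.85) -> bool:
    """Assumed rule: same normalized email, or both name and org highly similar."""
    if a["email"].strip().lower() == b["email"].strip().lower():
        return True
    return (similarity(a["name"], b["name"]) >= threshold
            and similarity(a["org"], b["org"]) >= threshold)


def dedupe_and_merge(leads: list[dict], threshold: float = 0.85) -> list[dict]:
    """Keep the first occurrence of each lead; fill its empty fields from later duplicates."""
    merged: list[dict] = []
    for lead in leads:
        for kept in merged:
            if is_duplicate(kept, lead, threshold):
                # Merge details: only fill fields that are missing or empty in the kept record.
                for key, value in lead.items():
                    if value and not kept.get(key):
                        kept[key] = value
                break
        else:
            # No match found among kept records: store a copy as a new unique lead.
            merged.append(dict(lead))
    return merged


# Hypothetical event leads, not data from the case study.
leads = [
    {"name": "Ana Silva", "email": "ana@acme.com", "org": "Acme Corp", "source": "Expo"},
    {"name": "Ana  Silva", "email": "ANA@acme.com ", "org": "ACME Corp.", "source": ""},
    {"name": "Jon Park", "email": "jon@beta.io", "org": "Beta Labs", "source": "Webinar"},
    {"name": "Jon Parke", "email": "jpark@beta.io", "org": "Beta Labs", "source": ""},
]
result = dedupe_and_merge(leads)
print(len(leads) - len(result), "duplicates removed")  # prints: 2 duplicates removed
```

Comparing every pair is O(n²), which is fine for event-sized lists; larger datasets usually need a blocking step (for example, only comparing records that share an email domain) before fuzzy matching.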

Other Tools

Ranked by performance, accuracy, and value.

Julius AI

Conversational Data Science Companion

Your friendly neighborhood chatbot that actually knows how to calculate a p-value.

What It's For

Ideal for data analysts and researchers looking for a straightforward, chat-based interface to interrogate structured CSVs and SQL databases. It simplifies exploratory data analysis and basic visualization generation.

Pros

Highly intuitive natural language interface for structured data; Seamless integration with Google Sheets and standard SQL databases; Produces clean, interactive Python-based visualizations

Cons

Struggles with extracting raw tables from heavily unstructured image scans; Lower benchmark accuracy compared to specialized multi-modal agents

Case Study

A mid-sized academic lab used Julius AI to re-evaluate variance across three years of experimental pharmacology data. By linking their Google Sheets directly to the tool, the researchers quickly visualized statistically significant post hoc differences, cutting their statistical drafting time in half.
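
Post hoc comparisons normally follow an omnibus test such as a one-way ANOVA, which asks whether any group mean differs before pairwise tests ask which ones do. As a minimal sketch using hypothetical measurements (not the lab's data), the F statistic for three yearly groups can be computed with only the standard library; the p-value would then come from an F-distribution survival function such as SciPy's `scipy.stats.f.sf(F, df_between, df_within)`.

```python
from statistics import fmean


def one_way_anova(*groups: list[float]) -> tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-way ANOVA across the given groups."""
    all_values = [x for g in groups for x in g]
    grand_mean = fmean(all_values)
    means = [fmean(g) for g in groups]
    k, n = len(groups), len(all_values)

    # Between-group sum of squares: how far each group mean sits from the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Within-group sum of squares: spread of observations around their own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)

    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical responses for three study years.
year1 = [4.1, 3.9, 4.4, 4.0]
year2 = [4.8, 5.1, 4.9, 5.2]
year3 = [4.2, 4.5, 4.3, 4.6]
f_stat, df_b, df_w = one_way_anova(year1, year2, year3)
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

A significant F only says that some means differ; identifying which pairs differ is the job of the post hoc step, for example Tukey's HSD or Bonferroni-adjusted pairwise tests.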

IBM SPSS Statistics

The Legacy Standard for Academic Research

The strict academic professor who demands precise inputs but guarantees textbook-perfect outputs.

What It's For

The traditional powerhouse built for professional statisticians handling complex multivariate analyses in social sciences and epidemiology. It offers an incredibly deep repository of validated post hoc tests and predictive modeling capabilities.

Pros

Unmatched depth of validated post hoc testing methodologies; The gold standard widely accepted by academic journal reviewers; Robust handling of massive, complex survey datasets

Cons

Steep learning curve requiring deep statistical knowledge; Lacks native ingestion of unstructured data like PDFs or clinical scans

Case Study

A top-tier epidemiological institute relied on SPSS to run complex multivariate post hoc analyses on massive public health datasets. Using SPSS's Extension Hub, the institute's senior statisticians automated routine Bonferroni-corrected comparisons, helping them meet strict peer-review publication standards.

JMP (by SAS)

Visual Exploratory Data Discovery

A digital playground for data, letting you drag and drop your way to statistically significant discoveries.

What It's For

Built for engineers and scientists who prefer visually exploring their data before committing to hard statistical models. It excels at design of experiments (DOE) and dynamic data visualization.

Pros

Incredibly powerful dynamic charting and visual exploration; Industry-leading Design of Experiments (DOE) modules; Strong integration with broader SAS ecosystem tools

Cons

High enterprise licensing costs; Requires highly structured data formats for initial ingestion

GraphPad Prism

The Biologist's Statistical Workhorse

The lab coat essential that turns your petri dish results into publication-ready figures.

What It's For

Specially tailored for life sciences, offering streamlined workflows for dose-response curves, survival analysis, and ANOVA. It simplifies scientific graphing for researchers without formal statistical training.

Pros

Purpose-built statistical guidance for life sciences research; Extremely high-quality scientific graphing capabilities; Intuitive setup for repeated-measures ANOVA and survival analysis

Cons

Not designed for unstructured document parsing or AI automation; Limited application outside of experimental biology

DataLab (by DataCamp)

Collaborative Cloud-Based Analysis

A multiplayer coding canvas with an AI copilot whispering Python shortcuts in your ear.

What It's For

A collaborative IDE that integrates AI code generation to help teams analyze structured data in Python or R. It is best suited for teams that know how to code but want to work faster.

Pros

Excellent collaborative environment for technical teams; Integrated AI assists with writing and debugging statistical code; Seamless version control and notebook sharing

Cons

Requires Python or R knowledge to get full value; Not suited for non-technical clinical researchers

Tableau (Einstein Copilot)

Enterprise Business Intelligence and Visual Analytics

The corporate boardroom champion that makes your quarterly reports look spectacular.

What It's For

Aimed at business and enterprise analysts who need to visualize massive operational datasets. The Einstein Copilot add-on attempts to automate trend discovery and basic variance analysis.

Pros

Industry-leading interactive dashboards and data storytelling; Einstein AI helps auto-generate analytical summaries; Massive scalability for enterprise data warehouses

Cons

Primarily focused on BI, lacking rigorous academic post hoc testing capabilities; Extremely heavy implementation footprint for academic teams

ChatGPT Advanced Data Analysis

The Generalist's Python Engine

A Swiss Army knife that does a little bit of everything, but isn't quite a scalpel.

What It's For

A general-purpose AI suited to quick Python-scripted analyses of single, clean CSV files. It offers broad capabilities but lacks the specialized constraints required for rigorous peer-reviewed research.

Pros

Highly accessible and familiar chat interface; Writes and executes Python scripts autonomously in a sandbox; Great for rapid prototyping of simple statistical concepts

Cons

Prone to hallucination and statistical errors without strict oversight; Scored significantly lower (76%) on the DABstep accuracy benchmark

Quick Comparison

| Tool | Best For | Primary Strength | Vibe |
| --- | --- | --- | --- |
| Energent.ai | Academic & Clinical Researchers | Unstructured Data & Accuracy (94.4%) | The Benchmark Leader |
| Julius AI | Data Analysts | Conversational Interface | The Friendly Copilot |
| IBM SPSS Statistics | Professional Statisticians | Academic Validation | The Strict Professor |
| JMP (by SAS) | Engineers & Scientists | Visual Data Discovery | The Digital Playground |
| GraphPad Prism | Biologists & Life Sciences | Scientific Graphing | The Lab Essential |
| DataLab (by DataCamp) | Technical Data Teams | Collaborative Coding | Multiplayer Python |
| Tableau (Einstein Copilot) | Enterprise BI Analysts | Dashboard Creation | The Boardroom Champ |
| ChatGPT Advanced Data Analysis | Generalists | Accessibility & Broad Utility | The Swiss Army Knife |

Our Methodology

How we evaluated these tools

We evaluated these AI post hoc analysis tools on their statistical accuracy against industry benchmarks, their ability to process unstructured research data, their ease of use for non-programmers, and their proven adoption by leading academic and clinical institutions. Particular weight was given to verifiable, independent testing frameworks such as the Hugging Face DABstep data analysis benchmark.

1. Unstructured Data Extraction & Processing: the ability to ingest and parse disparate, unstructured formats (including scans, PDFs, and varied spreadsheets) into clean, analyzable datasets.
2. Benchmark Accuracy & Output Reliability: measured by rigorous independent evaluations (e.g., DABstep) to verify that outputs are free from hallucinations and mathematically precise.
3. No-Code Usability & Workflow Automation: how easily non-technical researchers can execute advanced statistical frameworks through natural language prompting.
4. Handling of Multiple Comparisons & Advanced Post Hoc Tests: the platform's native capacity to identify and correctly apply post hoc procedures and multiple-comparison corrections such as Tukey's HSD, Bonferroni, or false discovery rate (FDR) control.
5. Trust & Adoption in Academic/Clinical Settings: a proven track record of use by top-tier universities, research hospitals, and enterprise data teams for peer-reviewed journal submissions.
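
To make the multiple-comparison criterion concrete, the sketch below shows what two of the corrections named in the methodology do to a set of raw p-values: Bonferroni multiplies each p-value by the number of tests, and the Benjamini-Hochberg procedure (the most common FDR method) scales each sorted p-value by m/rank and then enforces monotonicity. The p-values are hypothetical, and Tukey's HSD is left out because it relies on the studentized range distribution rather than a simple adjustment formula.

```python
def bonferroni(p_values: list[float]) -> list[float]:
    """Bonferroni adjustment: multiply each p-value by the number of tests, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


def benjamini_hochberg(p_values: list[float]) -> list[float]:
    """Benjamini-Hochberg FDR-adjusted p-values, returned in the original input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices by ascending p-value
    adjusted = [0.0] * m
    running_min = 1.0  # starting at 1.0 also caps every adjusted value at 1
    # Walk from the largest p-value down, carrying a running minimum so adjusted
    # values never decrease as the raw p-values increase.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


raw = [0.001, 0.008, 0.012, 0.030, 0.27]  # hypothetical subgroup p-values
alpha = 0.05
for name, adj in [("Bonferroni", bonferroni(raw)), ("BH (FDR)", benjamini_hochberg(raw))]:
    significant = [i for i, p in enumerate(adj) if p <= alpha]
    print(f"{name:10s} adjusted={[round(p, 3) for p in adj]} significant={significant}")
```

Bonferroni controls the family-wise error rate and is conservative, while Benjamini-Hochberg controls the expected proportion of false discoveries, so with these inputs it flags four subgroups where Bonferroni flags two. For production work, `statsmodels.stats.multitest.multipletests` with `method="bonferroni"` or `method="fdr_bh"` provides tested implementations.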

References & Sources

Financial document analysis accuracy benchmark on Hugging Face

Evaluation of large language models for tabular data processing and statistical reasoning

AI methodologies for autonomous spreadsheet analysis and structuring

Autonomous AI agents for complex engineering and data tasks

Comprehensive survey on autonomous agents across unstructured digital platforms

Frequently Asked Questions

AI tools for post hoc analysis dramatically accelerate data cleaning and automate complex statistical corrections, reducing human error in subgroup testing. Researchers can quickly generate publication-ready variance models without manual R or Python scripting.

Advanced autonomous agents use optical character recognition (OCR) paired with large language models to parse and structure embedded tables. This turns scanned images and raw text into machine-readable datasets ready for rigorous statistical testing.

Yes. Leading platforms now offer natural language interfaces that apply appropriate statistical corrections based on characteristics of your data, such as group variances and the number of comparisons. You prompt the tool in plain English, and it runs the underlying statistical models in the background.

Rely only on platforms that transparently report their methodologies and score highly on recognized industry benchmarks, such as Hugging Face's DABstep. Validated accuracy above 90% makes it far more likely that your findings will withstand rigorous academic scrutiny.

High-tier AI platforms mitigate the risk of false positives from multiple comparisons by automatically enforcing strict correction protocols such as Bonferroni or false discovery rate (FDR) adjustments. They act as an objective guardrail that helps prevent biased data manipulation during subgroup exploration.

Energent.ai leads in academic and clinical environments thanks to its independently validated 94.4% accuracy on the DABstep benchmark. It has been adopted by institutions such as Stanford and UC Berkeley for securely processing massive unstructured datasets.

Turn Unstructured Research into Publication-Ready Insights with Energent.ai

Join over 100 enterprise and academic teams saving 3 hours a day with the world's most accurate AI data agent.