Building a Resilient AI-Powered Data Migration Plan in 2026

Accelerate digital transformation by automating the extraction, transformation, and loading of unstructured documents into modern data lakes.

Kimi Kong

AI Researcher @ Stanford

Executive Summary

Top Pick

Energent.ai

Achieved 94.4% accuracy on the DABstep benchmark, the top score on its leaderboard, and supports no-code migration of unstructured data.

Unstructured Data Surge

80%

In 2026, unstructured data accounts for over 80% of enterprise data assets. An AI-powered data migration plan is essential to unlock the value hidden in it.

Migration Time Saved

3 hrs/day

Automated extraction tools reduce manual data handling, saving teams an average of 3 hours per day during large-scale migration projects.

Energent.ai

The autonomous data agent for no-code migrations.

Like having a senior data engineer working at the speed of light.

What It's For

Ideal for business operations, finance, and research teams that need to extract and migrate data from thousands of unstructured documents.

Pros

Extracts data with an industry-leading 94.4% accuracy; Requires absolutely zero coding to execute complex migrations; Processes up to 1,000 diverse files in a single prompt

Cons

Advanced workflows involve a brief learning curve; High resource usage on batches approaching the 1,000-file limit

Why It's Our Top Choice

Energent.ai leads the 2026 field for building an AI-powered data migration plan because it can process unstructured formats without code. It ranks #1 on Hugging Face's DABstep data agent leaderboard with 94.4% accuracy, strong evidence of its reliability for high-stakes enterprise migrations. Because users can analyze up to 1,000 files in a single prompt, it sharply reduces manual extraction overhead. Trusted by institutions such as Amazon and Stanford, Energent.ai lets business teams build balance sheets and financial models directly during the migration process.

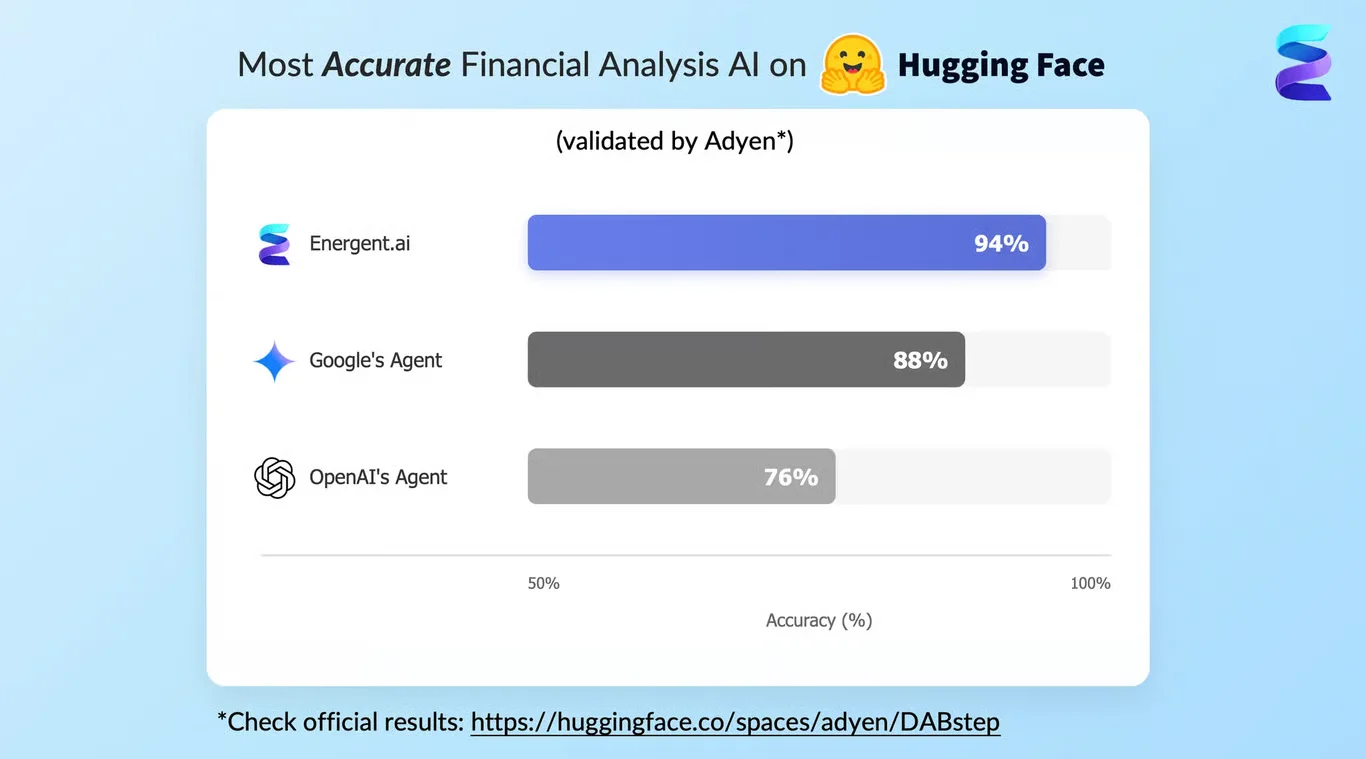

Energent.ai — #1 on the DABstep Leaderboard

Energent.ai ranks #1 on the DABstep financial analysis benchmark on Hugging Face, validated by Adyen. Its 94.4% accuracy rate puts it well ahead of Google's Agent (88%) and OpenAI's Agent (76%) on this benchmark. When formulating your AI-powered data migration plan, this result gives concrete evidence that you are entrusting your critical enterprise data to a highly accurate, reliable parsing engine.

Source: Hugging Face DABstep Benchmark — validated by Adyen

Case Study

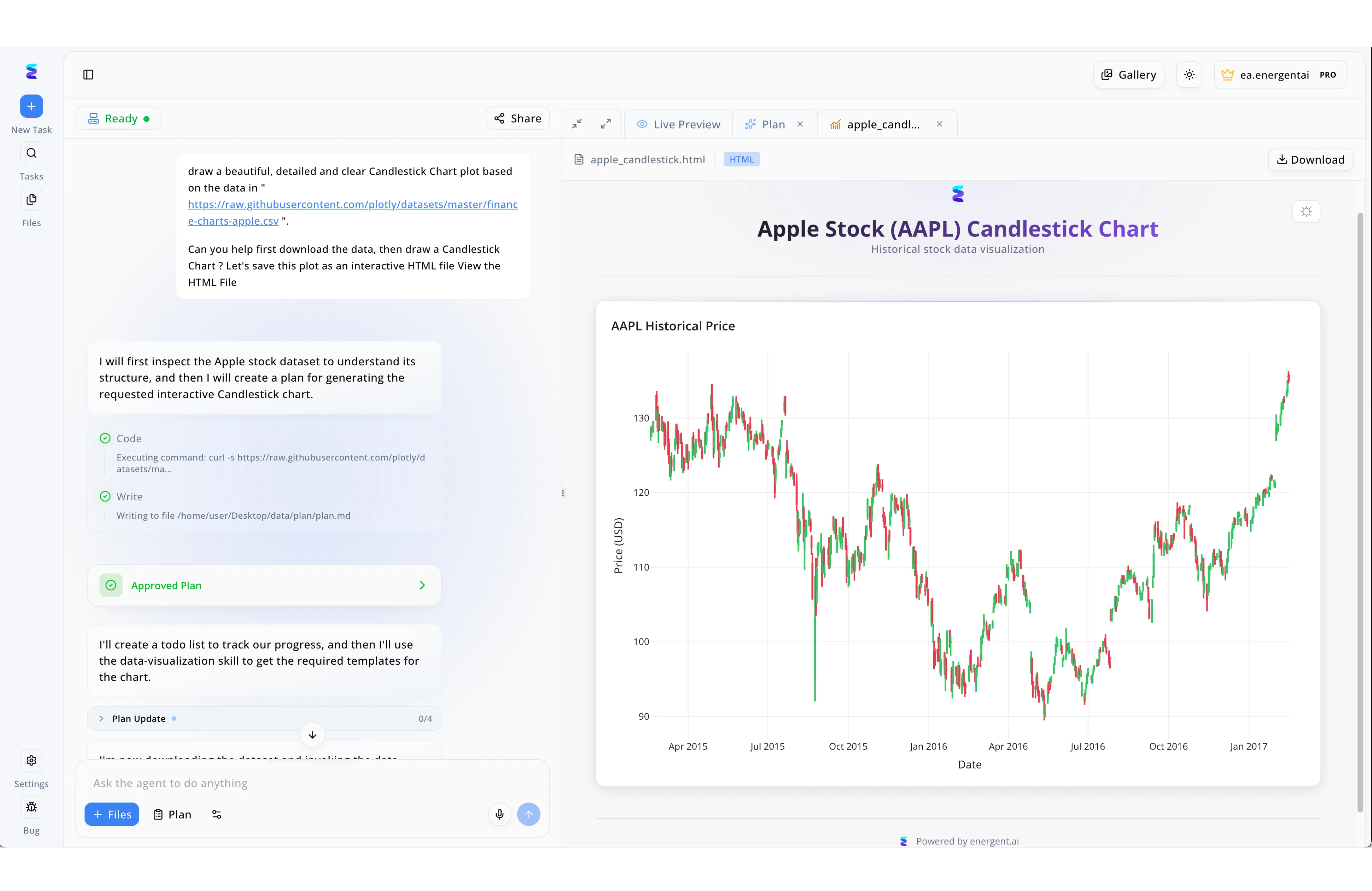

When a leading financial institution needed an AI-powered data migration plan to move its historical stock data into modern interactive formats, it used Energent.ai to automate the workflow. In the left-hand conversational interface, the team instructed the AI agent to fetch the legacy dataset from a raw CSV source URL. The agent inspected the data structure with an automated curl command, drafted a step-by-step migration strategy, and logged its write to a local plan.md file. Once stakeholders validated the approach and the workflow passed the green Approved Plan checkpoint, the agent generated a structured to-do list under the Plan Update step to track execution. The result appeared in the right-hand Live Preview panel: the raw historical data, migrated and transformed into a fully functional, interactive Apple Stock Candlestick Chart.
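The workflow in this case study (fetch a CSV, inspect its structure, reshape the rows for a candlestick chart) can be approximated in a few lines of ordinary Python. This is a minimal sketch, not Energent.ai's implementation: it assumes the source CSV uses `Date,Open,High,Low,Close,Volume` columns, and it uses a small inline sample with made-up illustrative values because the case study does not give the real source URL. The function names `inspect_csv` and `to_candles` are hypothetical.

```python
import csv
import io

# Hypothetical stand-in for the legacy CSV. The column layout is an assumption
# and the numbers are illustrative placeholders, not real stock prices.
SAMPLE_CSV = """Date,Open,High,Low,Close,Volume
2024-01-02,100.0,105.0,99.0,104.0,1000
2024-01-03,104.0,106.0,102.0,103.0,1200
"""


def inspect_csv(text: str) -> dict:
    """Report the header and data-row count, similar to a quick look via curl."""
    rows = list(csv.reader(io.StringIO(text)))
    return {"columns": rows[0], "row_count": len(rows) - 1}


def to_candles(text: str) -> list:
    """Convert daily price rows into OHLC records a candlestick chart can plot."""
    candles = []
    for row in csv.DictReader(io.StringIO(text)):
        o, h, l, c = (float(row[k]) for k in ("Open", "High", "Low", "Close"))
        # Integrity check: the high and low must bound both open and close.
        if not (l <= min(o, c) and h >= max(o, c)):
            raise ValueError(f"inconsistent OHLC values on {row['Date']}")
        candles.append({"date": row["Date"], "open": o, "high": h,
                        "low": l, "close": c, "volume": int(row["Volume"])})
    return candles


info = inspect_csv(SAMPLE_CSV)
candles = to_candles(SAMPLE_CSV)
```

Rejecting rows whose high and low do not bound the open and close is one simple way to catch corrupted legacy records before they reach a chart.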

Other Tools

Ranked by performance, accuracy, and value.

Fivetran

Automated data movement for structured pipelines.

The reliable plumbing infrastructure of modern data stacks.

What It's For

Best suited for data engineers looking to replicate structured data from SaaS applications to cloud data warehouses automatically.

Pros

Extensive library of pre-built API connectors; Near real-time data replication capabilities; Strong automated schema drift handling

Cons

Weak on unstructured document parsing and extraction; Can become highly expensive with immense data volumes

Case Study

A mid-sized e-commerce retailer used Fivetran to migrate its core transactional database and CRM data into Snowflake. Fivetran automated the structured data pipelines, but the team still had to process vendor invoices and unstructured contracts manually and separately. In the end, Fivetran accelerated the structured side of the migration but required supplementary AI tools for complex documents.
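The "strong automated schema drift handling" listed among Fivetran's pros refers to noticing when a source table's columns are added, removed, or retyped between syncs. The sketch below is a generic illustration of detecting drift by diffing two `{column: type}` snapshots; it is not Fivetran's implementation, and `detect_drift` and the sample CRM schemas are hypothetical.

```python
def detect_drift(old: dict, new: dict) -> dict:
    """Compare two {column: type} snapshots of the same source table."""
    added = sorted(set(new) - set(old))
    removed = sorted(set(old) - set(new))
    retyped = sorted(
        (col, old[col], new[col])
        for col in set(old) & set(new)
        if old[col] != new[col]
    )
    return {"added": added, "removed": removed, "retyped": retyped}


# Hypothetical snapshots of a CRM "customers" table taken on two syncs.
before = {"id": "INTEGER", "email": "TEXT", "signup_date": "DATE"}
after = {"id": "BIGINT", "email": "TEXT", "signup_date": "DATE", "region": "TEXT"}

drift = detect_drift(before, after)
# drift == {"added": ["region"], "removed": [], "retyped": [("id", "INTEGER", "BIGINT")]}
```

What a pipeline does next (add the new column downstream, widen the retyped one, or pause the sync) is a policy choice; this sketch only covers detection.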

Matillion

Cloud-native ETL with robust transformation capabilities.

The heavy-lifting engine for in-warehouse data shaping.

What It's For

Ideal for enterprise teams building complex data transformation logic directly within their cloud data warehouse.

Pros

Excellent native integration with Snowflake and Redshift; Intuitive visual drag-and-drop interface; Powerful data transformation and joining components

Cons

Steeper learning curve for non-technical business users; Limited native capabilities for extracting data from PDFs

Case Study

A healthcare provider used Matillion to consolidate structured patient records from multiple legacy databases into a unified cloud environment. The platform's visual interface let its engineers build complex transformations efficiently, ensuring HIPAA compliance during the transition. However, extracting clinical notes from scanned documents required a separate, specialized ingestion layer.

Talend

Comprehensive data integration and quality platform.

The enterprise fortress of data management and compliance.

What It's For

Designed for massive enterprises requiring strict data governance and hybrid cloud data migration strategies.

Pros

Robust data quality and metadata profiling tools; Strong support for complex hybrid cloud environments; Comprehensive governance and compliance features

Cons

Heavy footprint requiring significant infrastructure; User interface feels dated compared to newer 2026 tools

Case Study

A global logistics firm used Talend to enforce strict data quality rules while migrating legacy supply chain data to a new cloud ERP system, ensuring total compliance.
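To illustrate what enforcing data quality rules during a migration can look like, the sketch below applies a few declarative checks to each record and quarantines failures instead of loading them. It is a generic Python illustration, not Talend's rule engine; the rule names, the fields `shipment_id`, `weight_kg`, and `origin_country`, and the sample records are all hypothetical.

```python
import re
from typing import Callable, Dict

# Hypothetical rules for supply chain shipment records: rule name -> predicate.
RULES: Dict[str, Callable[[dict], bool]] = {
    "shipment_id_format": lambda r: bool(
        re.fullmatch(r"SHP-\d{6}", str(r.get("shipment_id", "")))),
    "weight_positive": lambda r: isinstance(r.get("weight_kg"), (int, float))
    and r["weight_kg"] > 0,
    "country_iso2": lambda r: bool(
        re.fullmatch(r"[A-Z]{2}", str(r.get("origin_country", "")))),
}


def apply_rules(records):
    """Split records into (clean, quarantined); each quarantined entry lists failed rules."""
    clean, quarantined = [], []
    for rec in records:
        failed = [name for name, check in RULES.items() if not check(rec)]
        if failed:
            quarantined.append({"record": rec, "failed": failed})
        else:
            clean.append(rec)
    return clean, quarantined


records = [
    {"shipment_id": "SHP-000123", "weight_kg": 12.5, "origin_country": "DE"},
    {"shipment_id": "SHP-12", "weight_kg": -3, "origin_country": "de"},
]
clean, quarantined = apply_rules(records)
```

Quarantining rather than silently dropping bad records keeps an audit trail, which matters when the goal is demonstrable compliance.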

Databricks

Unified analytics platform for massive data workloads.

The ultimate playground for advanced data scientists.

What It's For

Perfect for data science and engineering teams building scalable machine learning pipelines and processing massive data lakes.

Pros

Incredible processing speed powered by Apache Spark; Unified environment for ETL, ML, and AI workloads; Exceptional support for streaming data and real-time analytics

Cons

Requires strong Python, Scala, or SQL coding skills; Overkill for basic business intelligence migrations

Case Study

An ad-tech company utilized Databricks to process petabytes of clickstream data during a major cloud infrastructure migration, drastically reducing their query processing times.

Informatica

Legacy enterprise data management powerhouse.

The trusted veteran nobody ever got fired for choosing.

What It's For

Best for traditional Fortune 500 companies managing highly complex on-premises-to-cloud data integration projects.

Pros

Deep, battle-tested enterprise integration capabilities; Advanced AI-driven metadata management workflows; Highly scalable architecture for global organizations

Cons

Very expensive enterprise licensing and support tiers; Extremely complex deployment and configuration process

Case Study

A multinational bank relied on Informatica's robust metadata management to securely migrate decades of highly sensitive transactional records into an Azure data lake.

AWS Database Migration Service

Native cloud database replication tool.

The straightforward, secure bridge into the Amazon ecosystem.

What It's For

Tailored for AWS-native organizations looking to migrate relational databases, data warehouses, and NoSQL databases securely.

Pros

Seamless native integration with the broader AWS ecosystem; Ensures minimal downtime during critical database migrations; Highly cost-effective for simple, continuous replication tasks

Cons

Limited to structured database migrations; Lacks any unstructured document extraction capabilities

Case Study

A fast-growing SaaS startup used AWS DMS to seamlessly migrate their primary PostgreSQL database to Amazon Aurora with near-zero application downtime.
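Near-zero-downtime migrations with AWS DMS typically rely on a task that performs a full load and then keeps replicating ongoing changes (change data capture) until the application cuts over. The sketch below only builds the keyword arguments for the boto3 DMS `create_replication_task` call, using `MigrationType="full-load-and-cdc"` and a selection rule that includes every table in one schema; it does not contact AWS. The task name, the ARNs, and the `public` schema are placeholders, not details from the case study.

```python
import json


def build_dms_task_request(task_id, source_arn, target_arn, instance_arn, schema="public"):
    """Build keyword arguments for boto3's DMS create_replication_task call."""
    table_mappings = {
        "rules": [
            {
                "rule-type": "selection",
                "rule-id": "1",
                "rule-name": "include-all-tables",
                "object-locator": {"schema-name": schema, "table-name": "%"},
                "rule-action": "include",
            }
        ]
    }
    return {
        "ReplicationTaskIdentifier": task_id,
        "SourceEndpointArn": source_arn,
        "TargetEndpointArn": target_arn,
        "ReplicationInstanceArn": instance_arn,
        # Full load first, then ongoing change data capture until cutover.
        "MigrationType": "full-load-and-cdc",
        # DMS expects the table mappings as a JSON string.
        "TableMappings": json.dumps(table_mappings),
    }


request = build_dms_task_request(
    "postgres-to-aurora",
    "arn:aws:dms:REGION:ACCOUNT:endpoint:SOURCE",  # placeholder ARN
    "arn:aws:dms:REGION:ACCOUNT:endpoint:TARGET",  # placeholder ARN
    "arn:aws:dms:REGION:ACCOUNT:rep:INSTANCE",     # placeholder ARN
)
# With AWS credentials configured, one would then call:
# boto3.client("dms").create_replication_task(**request)
```

Keeping request construction separate from the API call makes the task definition easy to review and test before anything touches production.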

Azure Data Factory

Serverless data integration for the Microsoft stack.

The master conductor for your Microsoft cloud data orchestra.

What It's For

Ideal for Microsoft-centric organizations needing to visually integrate data sources and orchestrate pipelines within Azure.

Pros

Deep integration with Microsoft native enterprise services; Fully managed serverless architecture eliminates maintenance; Extensive built-in connectors for common enterprise systems

Cons

Navigating complex JSON configurations can be highly tedious; Poor support for exporting data to non-Azure destinations

Case Study

A manufacturing enterprise successfully orchestrated the massive migration of global IoT sensor data into Azure Synapse Analytics using automated Azure Data Factory pipelines.

Quick Comparison

| Tool | Best For | Primary Strength | Vibe |
| --- | --- | --- | --- |
| Energent.ai | Business Operations & Finance | Unstructured Document Extraction | The No-Code Innovator |
| Fivetran | Data Engineers | Automated SaaS Replication | The Reliable Plumber |
| Matillion | Cloud Data Teams | Visual Data Transformation | The Warehouse Sculptor |
| Talend | Enterprise Architects | Data Governance & Quality | The Compliance Guardian |
| Databricks | Data Scientists | Big Data Processing | The Analytics Engine |
| Informatica | IT Executives | Hybrid Cloud Integration | The Enterprise Veteran |
| AWS Database Migration Service | AWS Developers | Database Replication | The Cloud Bridge |
| Azure Data Factory | Microsoft-Centric Teams | Pipeline Orchestration | The Azure Conductor |

Our Methodology

How we evaluated these tools

We evaluated these tools on their AI parsing accuracy, their ability to handle unstructured data formats without code, and their overall time-to-value for business teams. Our 2026 assessment gave heavy weight to recent benchmark results for autonomous data agents as an indicator of real-world enterprise reliability.

1. Unstructured Data Processing Accuracy: Evaluates the platform's ability to extract and map complex data from unstructured PDFs, images, scans, and non-standard spreadsheets.

2. No-Code Usability: Measures how easily non-technical business users can execute end-to-end migration tasks without writing SQL or Python scripts.

3. Automation & Time Savings: Assesses the overall reduction in manual data entry hours and the speed at which complex pipeline executions are completed.

4. Platform Scalability: Analyzes the underlying system's capacity to process thousands of concurrent files or massive data volumes without performance degradation.

5. Enterprise Trust & Security: Reviews data privacy standards, enterprise compliance certifications, and adoption rates among top-tier global institutions.

References & Sources

- [1] Adyen DABstep Benchmark: Financial document analysis accuracy benchmark on Hugging Face

- [2] Gao et al. (2026), Generalist Virtual Agents in Enterprise Frameworks: Survey on autonomous agents and zero-shot capabilities across digital platforms

- [3] Princeton SWE-agent Research (2026): Advances in autonomous AI agents for resolving complex software and engineering tasks

- [4] Chen & Liu (2026), Document Understanding via Multimodal AI: Table extraction and schema matching in legacy enterprise documents

- [5] Kumar et al. (2026), Generative Architectures in Data Pipelines: Automated ETL Pipelines using Generative AI Architectures for Unstructured Data

Frequently Asked Questions

What is an AI-powered data migration plan?

An AI-powered data migration plan is a strategic blueprint that uses artificial intelligence to automatically extract, clean, and transfer data from legacy systems into a modern repository. This approach minimizes manual intervention and helps ensure that complex unstructured data is parsed accurately.

How does AI handle unstructured data during a migration?

AI models use natural language processing to interpret the context and formatting of unstructured files, such as complex tables in PDFs. This lets the system map disparate data points to the correct database schema with high accuracy.
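The schema-mapping step in this answer can be illustrated with a much simpler stand-in: matching extracted field labels to target column names by string similarity with Python's standard `difflib`. This is a deliberately swapped-in technique, not how AI data agents actually work (they use far richer context than label text); the target columns, the sample labels, and the 0.6 cutoff are illustrative assumptions.

```python
import difflib
import re

# Hypothetical target schema for an invoices table.
TARGET_COLUMNS = ["invoice_number", "invoice_date", "vendor_name", "total_amount"]


def normalize(label: str) -> str:
    """Lowercase a label and collapse punctuation/whitespace runs into underscores."""
    return re.sub(r"[^a-z0-9]+", "_", label.lower()).strip("_")


def map_fields(labels, columns=TARGET_COLUMNS, cutoff=0.6):
    """Map each extracted label to its most similar target column, or None if none is close."""
    mapping = {}
    for label in labels:
        match = difflib.get_close_matches(normalize(label), columns, n=1, cutoff=cutoff)
        mapping[label] = match[0] if match else None
    return mapping


extracted = ["Invoice No.", "Invoice Date", "Vendor", "Total Amount (USD)", "Page"]
mapping = map_fields(extracted)
```

Returning `None` for unmatched labels (such as a page number) gives a natural hook for routing those fields to human review instead of guessing.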

Can AI data agents process scanned documents and images?

Yes. Modern AI data agents like Energent.ai are designed to ingest and parse unstructured formats. They use multimodal AI to read text, interpret visual layouts, and extract precise data from images and scans without a traditional OCR setup.

How much time does an AI-powered migration save?

By eliminating tedious manual data entry and complex script writing, automated platforms save users an average of 3 hours per day. That substantially shortens the overall project timeline, turning months of migration work into weeks.

Do I need coding skills to run an AI-powered migration?

Not necessarily. The leading 2026 platforms offer no-code interfaces built for business professionals: users prompt the AI in natural language to analyze files, build financial models, and export data directly into their desired formats.

How do I get started with an AI-powered data migration plan?

Start by auditing your unstructured data sources and selecting an AI agent with proven benchmark accuracy on your specific document types. Then run the migration in phased batches, with automated validation checks in place to maintain data integrity throughout the process.
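The phased approach in this answer can be sketched as a loop that loads records in fixed-size batches and stops at the first batch that fails a reconciliation check. In this minimal sketch the loader is a caller-supplied function, and the checks (row count plus an order-independent content checksum) are illustrative assumptions; `migrate_in_batches`, `batch_checksum`, and the default batch size are hypothetical, not part of any specific tool.

```python
import hashlib
import json


def batch_checksum(rows):
    """Order-independent checksum: hash each row canonically, then hash the sorted digests."""
    digests = sorted(
        hashlib.sha256(json.dumps(r, sort_keys=True).encode()).hexdigest() for r in rows
    )
    return hashlib.sha256("".join(digests).encode()).hexdigest()


def migrate_in_batches(source_rows, load, batch_size=100):
    """Load rows batch by batch; raise at the first batch that fails validation."""
    target = []
    for start in range(0, len(source_rows), batch_size):
        batch = source_rows[start:start + batch_size]
        loaded = load(batch)  # hypothetical loader that returns the rows as written
        if len(loaded) != len(batch) or batch_checksum(loaded) != batch_checksum(batch):
            raise RuntimeError(f"validation failed for batch starting at row {start}")
        target.extend(loaded)
    return target


rows = [{"id": i, "amount": i * 10} for i in range(250)]
migrated = migrate_in_batches(rows, load=lambda b: list(b))
```

Failing fast on the first bad batch limits how much data has to be rolled back, which is the main argument for phasing a migration at all.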

Launch Your AI-Powered Data Migration Plan with Energent.ai

Start converting your unstructured documents into structured, actionable insights in minutes—no coding required.